A GPT-3 Travel Agent, and Offline AI

Here's a small experiment: A GPT-3 Travel Agent. Type in a city and it will give you a list of tourism suggestions. I made it to see how easily you can perform structured information extraction from language models.

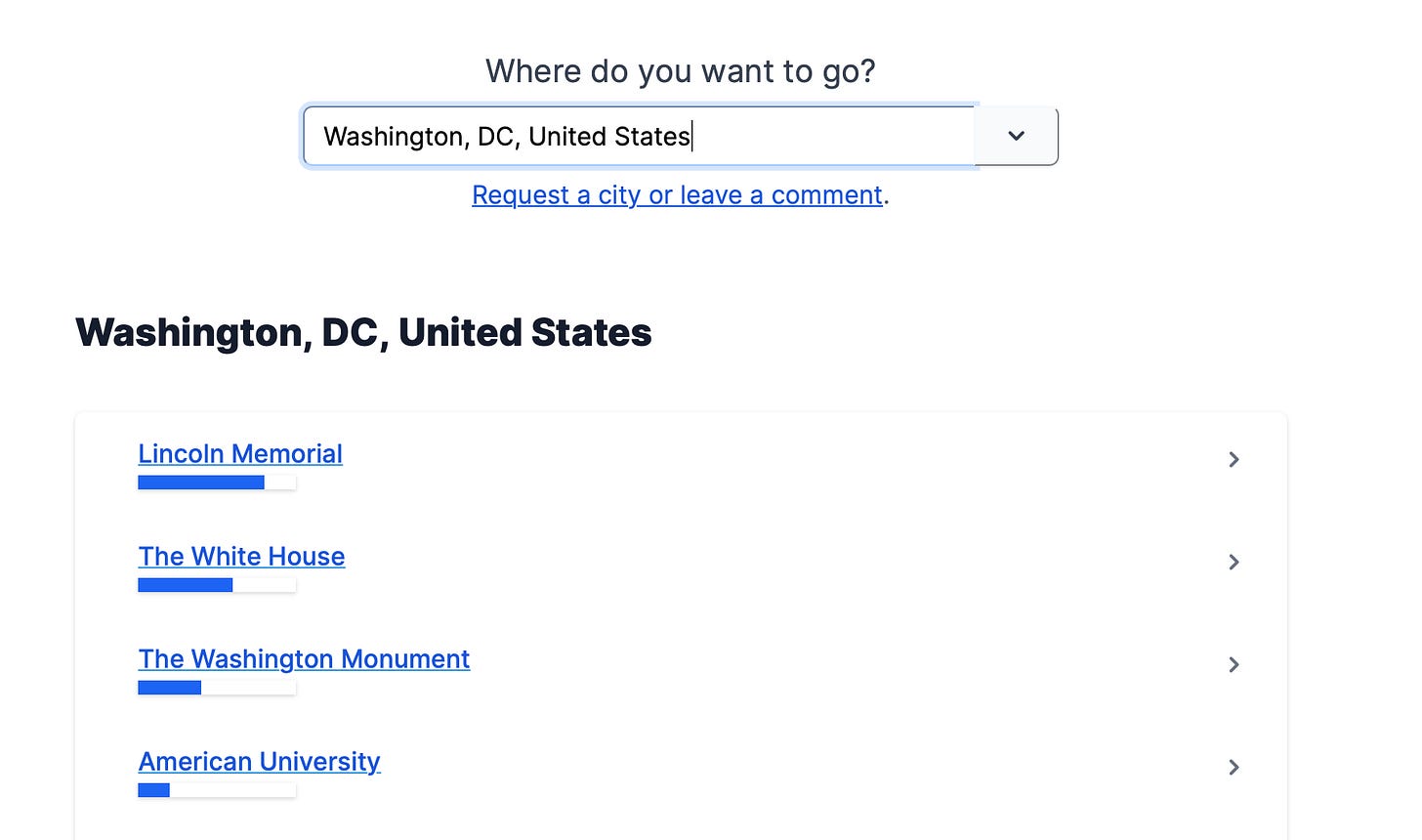

Here are example results for Washington, DC. Each suggestion is weighted by how many times GPT-3 generated it across multiple runs. These suggestions look decent to me, despite almost no parameter tuning on my part and just a bit of lightweight post-processing.
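To make the weighting idea concrete, here is a minimal sketch of how counts across runs could become weights. It is not the demo's actual code: the function names, the normalization rules, and the choice to count a suggestion at most once per run are all assumptions for illustration.

```python
import re
from collections import Counter

def normalize(suggestion: str) -> str:
    """Strip a leading bullet or list number, trim whitespace, lowercase."""
    cleaned = re.sub(r"^\s*(?:[-*•]|\d+[.)])\s*", "", suggestion)
    return cleaned.strip().lower()

def weight_suggestions(runs):
    """Weight each suggestion by the fraction of runs in which it appeared.

    `runs` is a list of runs; each run is a list of raw suggestion strings
    (hypothetical input shape, e.g. one model completion split into lines).
    """
    counts = Counter()
    for run in runs:
        # Count a suggestion once per run, even if the model repeated it.
        seen = {normalize(s) for s in run}
        seen.discard("")
        counts.update(seen)
    total = len(runs)
    weighted = [(name, count / total) for name, count in counts.items()]
    # Highest weight first; ties broken alphabetically for stable output.
    return sorted(weighted, key=lambda item: (-item[1], item[0]))

runs = [
    ["1. Lincoln Memorial", "2. National Mall"],
    ["- lincoln memorial", "- Smithsonian"],
    ["Lincoln Memorial "],
]
for name, weight in weight_suggestions(runs):
    print(f"{weight:.2f}  {name}")
```

Counting per run rather than per mention keeps one repetitive completion from dominating the ranking, though either choice would be reasonable here.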

Some thoughts on Online vs. Offline AI

We tend to think of AI-powered products as being connected live to the AI, like chatbots. Online AI makes sense when dynamic context is important or when the input domain is too large to precompute. But online AI also has problems: it's difficult and expensive to host and scale, and it's hard to impose quality control.

This demo, by contrast, has no AI in the serving loop. Everything is precomputed offline with a set of scripts and data files that can be version-controlled and quality-checked. The code ends up looking a bit like a static web hosting framework, but for AI.
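As a rough sketch of what "no AI in the serving loop" can look like, the snippet below splits the work into an offline build step and a serving step that only reads files. Everything here is hypothetical: `build`, `lookup`, `slug`, the JSON layout, and the `generate` callable (a stand-in for whatever offline model call and post-processing you use) are assumptions, not the demo's real code.

```python
import json
import re
from pathlib import Path

def slug(city: str) -> str:
    """Turn a city name into a safe file name, e.g. 'Washington, DC' -> 'washington-dc'."""
    return re.sub(r"[^a-z0-9]+", "-", city.lower()).strip("-")

def build(cities, generate, out_dir):
    """Offline step: call the model once per city and write one JSON file each.

    `generate` is a hypothetical callable that takes a city name and returns
    a list of suggestions. The files it produces can be reviewed and committed.
    """
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    for city in cities:
        record = {"city": city, "suggestions": generate(city)}
        path = out / f"{slug(city)}.json"
        path.write_text(json.dumps(record, indent=2), encoding="utf-8")

def lookup(city, out_dir):
    """Serving step: read the precomputed file. No model call happens here."""
    path = Path(out_dir) / f"{slug(city)}.json"
    if not path.exists():
        return None  # City was never precomputed.
    return json.loads(path.read_text(encoding="utf-8"))
```

Because the output is plain files, a human can diff, edit, or reject results before anything reaches users, which is the quality-control advantage described above.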

When the use case supports it, precomputing results like this makes tremendous sense to me. I suspect we'll see some interesting players in the "AI Content Pipeline" market. Humans will still act as editors and senior writers, while the AI fills the top of the funnel.

This is my first time playing with any sort of content generation, and I'm curious what's already out there in the wild. If you have any suggestions or links I should be looking at, let me know.